Mar 17, 2026

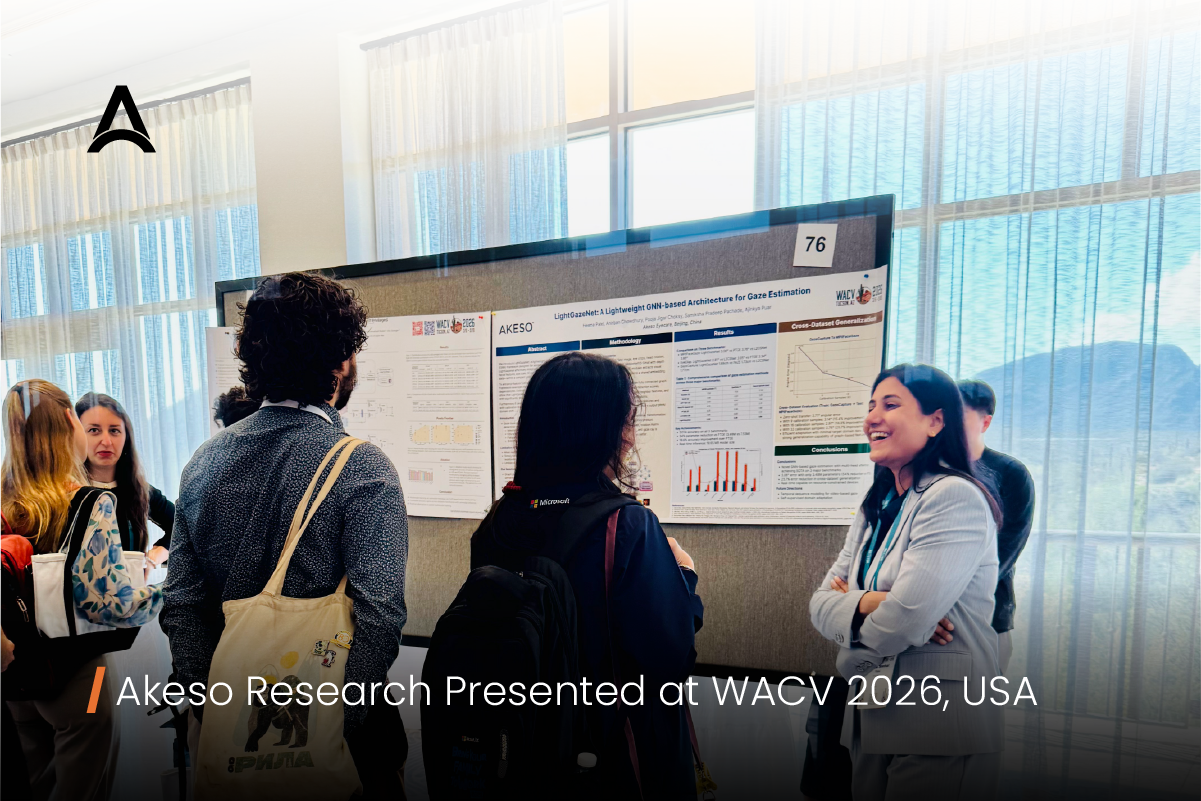

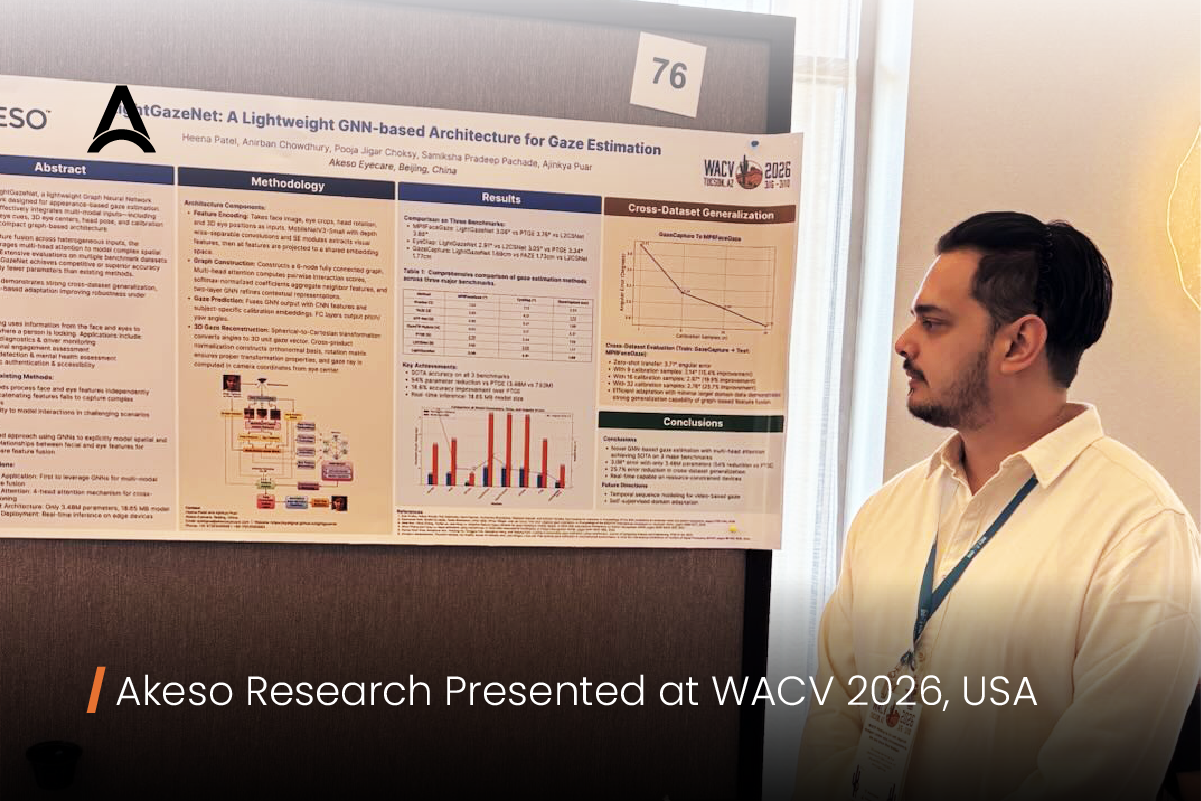

Akeso is proud to share that our team presented the research paper “LightGazeNet: A Lightweight GNN-based Architecture for Gaze Estimation” at WACV 2026, held in Tucson, Arizona, United States. Representing Akeso at the conference, Dr. Heena Patel and Ajinkya Puar shared insights from our ongoing research in gaze estimation with the global computer vision community.

LightGazeNet introduces a lightweight graph neural network (GNN) architecture for appearance-based gaze estimation that aims to balance predictive accuracy with computational efficiency. The model integrates multiple visual cues, including facial features, eye regions, head pose, and calibration information, to better capture the relationships between these signals. By modeling these interactions within a graph-based framework, LightGazeNet aims to improve gaze prediction while retaining the efficiency required for real-world deployment.
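For readers new to GNNs, the general idea of fusing several cues through a graph can be illustrated with a toy example. This is a minimal sketch of generic mean-aggregation message passing. It is not LightGazeNet's architecture, and the paper's actual design may differ. Everything here is a hypothetical assumption chosen for illustration: one node per cue (`face`, `eyes`, `head_pose`, `calibration`), a fully connected cue graph, two message-passing layers, an 8-dimensional embedding per cue, random stand-in weights and features, and a (yaw, pitch) output.

```python
import math
import random

# Hypothetical cue nodes; the paper's actual graph design may differ.
NODES = ["face", "eyes", "head_pose", "calibration"]
# Assumption: every cue node exchanges messages with every other cue node.
EDGES = {n: [m for m in NODES if m != n] for n in NODES}


def matvec(w, x):
    """Multiply a weight matrix (list of rows) by a vector."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def relu(v):
    """Element-wise ReLU."""
    return [max(0.0, x) for x in v]


def random_matrix(rows, cols, rng):
    """Small uniform random weights (stand-ins for trained parameters)."""
    scale = 1.0 / math.sqrt(cols)
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]


def message_passing_layer(h, w_self, w_neigh):
    """One mean-aggregation layer: h_i' = ReLU(W_self h_i + W_neigh * mean_j h_j)."""
    out = {}
    for node, feats in h.items():
        neigh = [h[m] for m in EDGES[node]]
        # Average the neighbours' feature vectors dimension by dimension.
        mean = [sum(vals) / len(neigh) for vals in zip(*neigh)]
        combined = [a + b for a, b in zip(matvec(w_self, feats), matvec(w_neigh, mean))]
        out[node] = relu(combined)
    return out


def predict_gaze(h, params):
    """Run the message-passing layers, mean-pool the nodes, map to (yaw, pitch)."""
    for w_self, w_neigh in params["layers"]:
        h = message_passing_layer(h, w_self, w_neigh)
    pooled = [sum(vals) / len(h) for vals in zip(*h.values())]
    return matvec(params["head"], pooled)


if __name__ == "__main__":
    rng = random.Random(0)
    dim = 8  # hypothetical per-cue embedding size
    params = {
        "layers": [(random_matrix(dim, dim, rng), random_matrix(dim, dim, rng)) for _ in range(2)],
        "head": random_matrix(2, dim, rng),
    }
    # Stand-in embeddings; a real model would compute these from image crops and metadata.
    features = {n: [rng.gauss(0.0, 1.0) for _ in range(dim)] for n in NODES}
    yaw, pitch = predict_gaze(features, params)
    print(f"yaw={yaw:.3f}, pitch={pitch:.3f}")
```

Each cue's embedding is updated using the average of the other cues' embeddings, so information from, say, head pose can influence the eye-region representation before the final prediction. Keeping the graph to a handful of nodes and the layers small is one way such a design can stay lightweight, though the paper should be consulted for how LightGazeNet actually achieves its efficiency.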

Efficient gaze estimation plays an increasingly important role in applications such as human–computer interaction, augmented reality interfaces, assistive technologies, and intelligent vision systems. LightGazeNet explores how graph neural networks can help make gaze estimation models both accurate and lightweight, supporting practical use across a wide range of devices and interactive environments.

Read more about the research paper here: https://lnkd.in/g5NK8bDU

Authors:

Dr. Heena Patel, Ajinkya Puar, Anirban Chowdhury, Pooja Choksy, Samiksha Pachade, Ph.D.